Post authored by Maria Shaldybin, an Engineer on the Diego Team at VMware.

What is container-to-container networking?

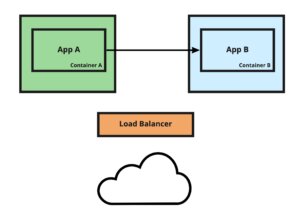

To gain flexibility and scalability, many applications today are built using a microservice architecture. Cloud Foundry provides the tools developers need to deploy and manage their microservices easily. In a typical architecture, each microservice is deployed as a separate application running in its own containers. For secure communication over the internal network, these containers can use container-to-container networking.

In Cloud Foundry, application containers cannot talk to each other over the internal network by default. To enable this, you create a network policy that allows one application to talk to another.
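To make the default-deny behavior concrete, here is a minimal Go model of how such a policy check could work. The `Policy` type and `Allowed` function are hypothetical illustrations, not part of Cloud Foundry's policy server or any of its APIs; the model only captures the idea that traffic is denied unless a policy names the source app, destination app, protocol, and port.

```go
package main

import "fmt"

// Policy is a hypothetical, simplified model of a network policy: traffic
// from Source to Destination over Protocol on ports [StartPort, EndPort]
// is allowed. It is not Cloud Foundry's actual data model.
type Policy struct {
	Source, Destination string
	Protocol            string // "tcp" or "udp"
	StartPort, EndPort  int
}

// Allowed reports whether src may open a connection to dst. The model is
// default-deny: if no policy matches, the answer is false.
func Allowed(policies []Policy, src, dst, proto string, port int) bool {
	for _, p := range policies {
		if p.Source == src && p.Destination == dst && p.Protocol == proto &&
			port >= p.StartPort && port <= p.EndPort {
			return true
		}
	}
	return false
}

func main() {
	var policies []Policy
	fmt.Println(Allowed(policies, "app-A", "app-B", "tcp", 8080)) // false: no policy yet

	// Models: cf add-network-policy app-A --destination-app app-B --protocol tcp --port 8080
	policies = append(policies, Policy{"app-A", "app-B", "tcp", 8080, 8080})
	fmt.Println(Allowed(policies, "app-A", "app-B", "tcp", 8080)) // true
	fmt.Println(Allowed(policies, "app-B", "app-A", "tcp", 8080)) // false: policies are directional
}
```

The model is directional: a policy from app A to app B does not let app B open connections to app A; that would need a second policy.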

How to set up container-to-container networking

To enable direct communication from app A to app B, you can create a network policy that will allow the traffic from app A to app B.

cf add-network-policy app-A --destination-app app-B --protocol tcp --port 8080

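Now app A can talk to app B on port 8080 through app B's internal route, app-B.apps.internal (this assumes that internal route has been mapped to app B):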

cf ssh app-A -c "curl http://app-B.apps.internal:8080"

Reaching port 8080 directly requires unproxied port mappings to be enabled in your Cloud Foundry deployment. This can pose a security risk: every container exposes its application port directly, and if the traffic needs to be encrypted, the application developer becomes responsible for terminating TLS.

Using platform-enabled HTTPS for container-to-container networking

To secure communication between application containers, we introduced a dedicated port, 61443, that serves HTTPS for container-to-container networking.

Now you can create a network policy for that port:

cf add-network-policy app-A --destination-app app-B --protocol tcp --port 61443

App A can now talk to app B on port 61443 over HTTPS:

cf ssh app-A -c "curl https://app-B.apps.internal:61443"

What is happening behind the scenes?

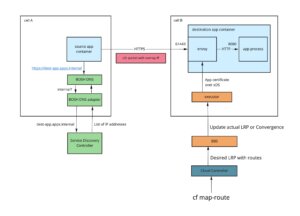

In Cloud Foundry, every application container running on Linux runs Envoy, which proxies all incoming traffic to the application process. Envoy listens on several ports, starting at 61001, to serve the different kinds of traffic an application receives: each of the application's configured ports, the SSH port, and now port 61443, which is reserved for HTTPS container-to-container communication.

Envoy terminates TLS on port 61443 and proxies the resulting plain HTTP traffic to port 8080 inside the container. The server application therefore keeps serving HTTP on port 8080 and does not need to handle TLS termination itself.

On port 61443, Envoy serves a TLS certificate that lists all of the application's internal routes in its Subject Alternative Name (SAN) extension. Clients can therefore verify that they are talking to the server they intended to reach.
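To see what listing internal routes in the SAN means for a client, the sketch below creates a certificate whose `DNSNames` field (its SAN entries) holds an app's internal routes, then checks names with `x509.Certificate.VerifyHostname`, the host-name check Go's TLS client applies during verification. The route `app-b-v2.apps.internal` is a hypothetical second route, `certForRoutes` is a hypothetical helper, and the certificate is self-signed only to keep the example self-contained; the platform's real issuer and certificate fields are not modeled.

```go
package main

import (
	"crypto/ecdsa"
	"crypto/elliptic"
	"crypto/rand"
	"crypto/x509"
	"errors"
	"fmt"
	"math/big"
	"time"
)

// certForRoutes (hypothetical) builds a certificate with one SAN entry per
// internal route. Self-signed here purely to keep the sketch self-contained.
func certForRoutes(routes []string) (*x509.Certificate, error) {
	if len(routes) == 0 {
		return nil, errors.New("at least one route is required")
	}
	key, err := ecdsa.GenerateKey(elliptic.P256(), rand.Reader)
	if err != nil {
		return nil, err
	}
	// Random 128-bit serial number.
	serial, err := rand.Int(rand.Reader, new(big.Int).Lsh(big.NewInt(1), 128))
	if err != nil {
		return nil, err
	}
	tmpl := &x509.Certificate{
		SerialNumber: serial,
		DNSNames:     routes, // SAN entries: one per internal route
		NotBefore:    time.Now().Add(-time.Minute),
		NotAfter:     time.Now().Add(24 * time.Hour),
		KeyUsage:     x509.KeyUsageDigitalSignature,
		ExtKeyUsage:  []x509.ExtKeyUsage{x509.ExtKeyUsageServerAuth},
	}
	der, err := x509.CreateCertificate(rand.Reader, tmpl, tmpl, &key.PublicKey, key)
	if err != nil {
		return nil, err
	}
	return x509.ParseCertificate(der)
}

func main() {
	cert, err := certForRoutes([]string{"app-B.apps.internal", "app-b-v2.apps.internal"})
	if err != nil {
		panic(err)
	}
	// VerifyHostname succeeds only for names present in the SAN.
	fmt.Println(cert.VerifyHostname("app-B.apps.internal") == nil) // true
	fmt.Println(cert.VerifyHostname("app-C.apps.internal") == nil) // false
}
```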

Note: Container-to-container networking is not available for apps hosted on Windows.

Dynamic certificate generation

Historically, the map-route and unmap-route commands have changed an application's routes on the fly, without requiring a restart. We want to preserve this behavior: whenever an internal route is mapped or unmapped, Cloud Foundry dynamically updates the TLS certificate used for container-to-container communication, again without restarting the application.
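One common way to swap a certificate without restarting a server is Go's `tls.Config.GetCertificate` callback, which the TLS stack consults during handshakes. The sketch below pairs it with an atomically replaced certificate. `certStore`, `SetRoutes`, and `selfSignedFor` are hypothetical names, and this illustrates the general technique only; it is not how Diego or Envoy implements the update. Connections established after `SetRoutes` returns get the new certificate, while existing connections keep the one they negotiated.

```go
package main

import (
	"crypto/ecdsa"
	"crypto/elliptic"
	"crypto/rand"
	"crypto/tls"
	"crypto/x509"
	"errors"
	"fmt"
	"math/big"
	"sync/atomic"
	"time"
)

// certStore (hypothetical) holds the certificate currently being served.
type certStore struct {
	current atomic.Pointer[tls.Certificate]
}

// SetRoutes regenerates the certificate for the given internal routes and
// atomically swaps it in, e.g. after a route is mapped or unmapped.
func (s *certStore) SetRoutes(routes []string) error {
	cert, err := selfSignedFor(routes)
	if err != nil {
		return err
	}
	s.current.Store(cert)
	return nil
}

// GetCertificate has the signature expected by tls.Config.GetCertificate.
func (s *certStore) GetCertificate(_ *tls.ClientHelloInfo) (*tls.Certificate, error) {
	if c := s.current.Load(); c != nil {
		return c, nil
	}
	return nil, errors.New("no certificate configured yet")
}

// selfSignedFor creates a self-signed certificate with one SAN entry per
// route. Self-signing keeps the sketch self-contained.
func selfSignedFor(routes []string) (*tls.Certificate, error) {
	if len(routes) == 0 {
		return nil, errors.New("at least one route is required")
	}
	key, err := ecdsa.GenerateKey(elliptic.P256(), rand.Reader)
	if err != nil {
		return nil, err
	}
	serial, err := rand.Int(rand.Reader, new(big.Int).Lsh(big.NewInt(1), 128))
	if err != nil {
		return nil, err
	}
	tmpl := &x509.Certificate{
		SerialNumber: serial,
		DNSNames:     routes,
		NotBefore:    time.Now().Add(-time.Minute),
		NotAfter:     time.Now().Add(24 * time.Hour),
		KeyUsage:     x509.KeyUsageDigitalSignature,
		ExtKeyUsage:  []x509.ExtKeyUsage{x509.ExtKeyUsageServerAuth},
	}
	der, err := x509.CreateCertificate(rand.Reader, tmpl, tmpl, &key.PublicKey, key)
	if err != nil {
		return nil, err
	}
	leaf, err := x509.ParseCertificate(der)
	if err != nil {
		return nil, err
	}
	return &tls.Certificate{Certificate: [][]byte{der}, PrivateKey: key, Leaf: leaf}, nil
}

func main() {
	var store certStore
	// A listener such as tls.Listen("tcp", ":61443", cfg) would see each swap
	// on its next handshake, with no restart.
	cfg := &tls.Config{GetCertificate: store.GetCertificate}

	if err := store.SetRoutes([]string{"app-B.apps.internal"}); err != nil {
		panic(err)
	}
	// Hypothetical trigger: a second internal route was mapped to app B.
	if err := store.SetRoutes([]string{"app-B.apps.internal", "app-b-v2.apps.internal"}); err != nil {
		panic(err)
	}
	c, err := cfg.GetCertificate(&tls.ClientHelloInfo{ServerName: "app-B.apps.internal"})
	if err != nil {
		panic(err)
	}
	fmt.Println(c.Leaf.DNSNames) // [app-B.apps.internal app-b-v2.apps.internal]
}
```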

Additional resources

- Container-to-Container Networking: Cloud Foundry Documentation

- Configuring Container-to-Container Networking: Cloud Foundry Documentation

- A Practical Guide to Microservice Routing

- Context Path Routing for Creating and Managing Multiple Microservices

Ready to try this new feature? It will be available in the Diego release starting with v2.59.0. We would be happy to hear your feedback in our Diego Slack channel!