(This post assumes you’ve read the introductory blog post.)

While Cloud Foundry is the world’s premier developer platform, we have heard from customers how we can give them even better control and security, and help them integrate Cloud Foundry into their current and new environments. In this post, we’ll talk about some common pain points and how Cloud Foundry is evolving to give organizations better control and smoother adoption on their own terms.

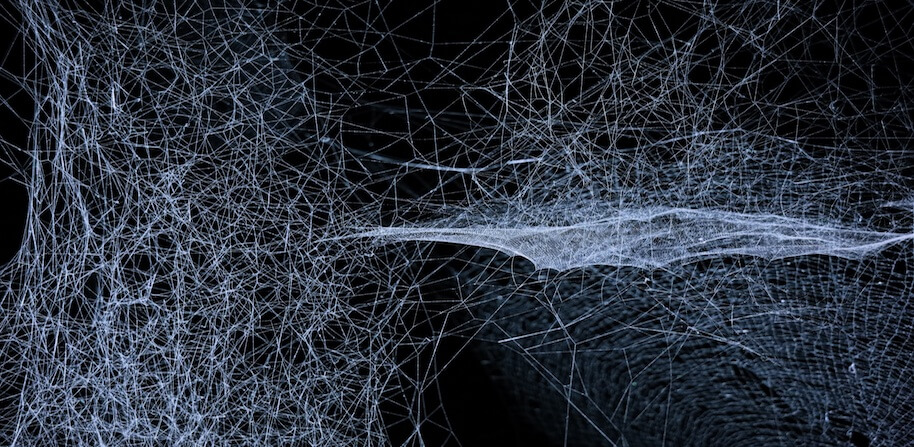

To summarize: the network is becoming application-aware and we’re embracing software-defined networking to help developers, DBAs, and security engineers manage and secure their CF networks in new, flexible ways.


Your DBA Feels Exposed (“I want to apply least-privilege access to my database.”)

When we talk to customers, the first thing we hear from many of their network and database teams is: “I don’t want to whitelist the entire Cloud Foundry subnet range to [My-Sensitive-Database]! I want to grant access to applications on a case-by-case basis.” Security administrators applying the principle of least privilege need to constrain network access to the applications that require it, and no more.

Today, because container IP addresses are ephemeral, per-application firewall rules are impossible, and network security administrators must permit the entire Cloud Foundry subnet access to external dependencies. This is a blunt instrument at best: all traffic from all CF-hosted applications must be permitted through to the database, warehouse, or other user-provided service, which is the opposite of the “least privilege” that DBAs and admins want to apply.

Application Security Groups (ASGs) help, but only control outbound access. ASGs are not application-specific and cannot be used to manage permissions for a specific CF-hosted application. They must also be kept up to date if the remote IP address of your external database changes.


Your Security Engineer’s Lamentations

A related customer need is traffic identification by application (or even down to application instance), often for forensics. Incident response teams tell us, “I need to know which application instance generated this suspicious-looking traffic to [My-Sensitive-Database]!” Security personnel may need to identify and isolate a compromised component in a hurry, and trying to map ephemeral ports and IP addresses back to a specific container is difficult today.

Security admins also regularly flag the mandatory out-and-back-in traffic flow between microservices within Cloud Foundry: “I don’t want my internal microservice traffic egressing onto the public VLAN to reach another microservice within the platform. I want to skip the GoRouter hop.”

With the advent of the new batteries-included container networking stack in future Cloud Foundry releases, we’re solving these problems in an inventive new way. Not only will these problems become a thing of the past, but security administrators will enjoy a new level of transparency in Cloud Foundry network security unlike any other platform in the market today.

We’re Bringing Transparency to Cloud Foundry Networking

With container networking, we’ve moved to an IP-address-per-container as our fundamental building block, and in our “batteries included” release, all application instances live in a single, global overlay network, effectively creating a separate “data network plane.” All traffic within the application network can be uniquely matched to the originating application instance. Tracing back suspicious activity while investigating a compromise is suddenly trivial — just look in the packet capture for the real IP address!

This direct addressability also solves the “traffic hairpinning” problem. Since instances can now reach other instances directly, we no longer require GoRouter to be in the path for microservice-to-microservice communications. (It also has the benefit of enabling arbitrary protocols on the wire — but that’s for another time.) The trick of course is to enable convenient authorization management, so that system and security administrators can exert control over their microservices topology.

Granular Control

To provide application identity, we encode the source application identifier in every network packet so that Cloud Foundry can filter all network connections by application at source and destination. This enables security-conscious developers and admins to control what applications are accessing other applications.

We’ve added access policy options to the CF CLI to manage rules for application network connections. With this facility, security operators can begin to move away from whitelisting the entire physical CF range, and instead express intent in terms of applications and endpoints, such as, “AppA can only talk to AppB, and nothing else.” In the future, we’ll add external endpoints, like “AppB can talk to [My-Sensitive-Database], but nothing else can.” (If you squint, you can see this obsoleting ASGs in the not-too-distant future, as we move to a flexible logical topology, and away from static physical rules. More on this in a moment.)
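To make the “AppA can only talk to AppB, and nothing else” intent concrete, here is a minimal sketch of a default-deny, app-to-app policy set. It is an illustration only, not the NetMan implementation or the CF CLI: the `Policy` and `PolicyStore` names, the app names, and the port are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """One allow rule: source app may reach destination app on protocol/port."""
    source: str
    destination: str
    protocol: str
    port: int


class PolicyStore:
    """Hypothetical in-memory policy set. Anything not listed is denied."""

    def __init__(self):
        self._policies = set()

    def allow(self, source, destination, protocol, port):
        self._policies.add(Policy(source, destination, protocol, port))

    def remove(self, source, destination, protocol, port):
        # discard() is a no-op if the rule was never present
        self._policies.discard(Policy(source, destination, protocol, port))

    def is_allowed(self, source, destination, protocol, port):
        return Policy(source, destination, protocol, port) in self._policies


store = PolicyStore()
store.allow("AppA", "AppB", "tcp", 8080)

print(store.is_allowed("AppA", "AppB", "tcp", 8080))  # True: explicitly allowed
print(store.is_allowed("AppB", "AppA", "tcp", 8080))  # False: rules are directional
print(store.is_allowed("AppA", "AppC", "tcp", 8080))  # False: default deny
```

Note that the rules name applications, not IP addresses, so they stay valid as containers come and go.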

Today in the “NetMan” OSS project for container networking, you can express app-to-app policies in the CLI and see them enforced in real time. We’ll include this filtering process with BOSH-deployed services in the near future, bringing those services into the “service graph”, so administrators and developers can declare network authorization rules (akin to tuples) to services like MySQL, RabbitMQ, Redis and others.

Eventually Cloud Foundry will “modularize” that filtering process and place it at the “edge” of the Cloud Foundry network. This feature is a critical new capability for security and compliance. Cloud Foundry will be able to deploy a filtering proxy per app, per classification (e.g., for PCI DSS, one could have “CDE” and “non-CDE”), or other arbitrary groupings. The control point moves off the traditional static 5-tuple firewall and into the intelligent application-aware process. For some, this change will take some getting used to. The IT compliance team that is familiar with verifying security enforcement based upon a report of static IP addresses will need to adopt a new approach to gathering the evidence they need to prove compliance. But rather than lament the disappearance of static IP address verification, operators and auditors can embrace a real-time, dynamic model of their logical system, not a brittle physical snapshot. CF will give administrators (and their auditors) a clear point of enforcement with the richness of Cloud Foundry’s application-centric workflows.

Mitigating a Hostile Environment

Every security admin knows that every network is hostile and possibly compromised in some way. This new container network helps blunt some common “peripheral” threats, too.

Now that application traffic is encoded with packet identifiers and transmitted within a separate overlay network, every single host filters unwanted inbound traffic. Arbitrary external traffic won’t be encoded as described in the policy language and therefore won’t be routed into the overlay network by default, so it will be dropped.
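As a rough sketch of that host-level behavior, the filter below admits a packet only if it carries an application tag and that tag is allowed to reach the destination port. The `Packet` type, the `app_tag` field, and the tag values are assumptions made for illustration; the real encoding and enforcement mechanism are not described here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Packet:
    """A simplified packet; app_tag stands in for the encoded app identifier."""
    dest_port: int
    app_tag: Optional[str] = None  # None models arbitrary external traffic


def filter_inbound(packet, allowed):
    """Default deny: admit only tagged packets whose (tag, port) is allowed."""
    if packet.app_tag is None:
        return False  # untagged traffic never enters the overlay
    return (packet.app_tag, packet.dest_port) in allowed


# Hypothetical rule set held by one host: only this tag may reach port 8080.
allowed = {("app-a-tag", 8080)}

print(filter_inbound(Packet(8080, "app-a-tag"), allowed))  # True
print(filter_inbound(Packet(8080), allowed))               # False: untagged
print(filter_inbound(Packet(8080, "unknown"), allowed))    # False: no matching rule
```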

Similarly, should an application be compromised or otherwise become suspect, deauthorizing (and thereby blocking) the network traffic in the new model is as simple as issuing a disallow-access command on CF. Additionally, since packets are now tagged by application with true IP addresses, incident responders and forensics teams can trace network activity directly down to the application instance.

Your CIO can’t just transition wholesale. “My firewalls aren’t going anywhere!”

Finally, some organizations face the reality that they must continue to enforce policy at physical points like firewalls. For all the transparency and inbuilt filtering coming up in CF, some audit and compliance regimes will continue to live in the “five-tuple” world. We’ve been thinking about this problem, too.

To apply least privilege, network and firewall administrators want a static source IP per application to “whitelist” at the perimeter. For example, a firewall admin may want to express, “Application A is permitted from source IP 1.2.3.4 to the database endpoint on tcp/3306.”
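For readers less familiar with the “five-tuple” world, the example rule above can be sketched as a simple match on source address, destination address, protocol, and destination port (the source port, the fifth element, is left as “any”). The `FirewallRule` class is hypothetical, and the database address 192.0.2.10 is a placeholder from the documentation range, not a real endpoint.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class FirewallRule:
    """A static allow rule; source port is implicitly 'any'."""
    source: str       # CIDR, e.g. a single host as /32
    destination: str  # CIDR
    protocol: str
    dest_port: int

    def matches(self, src_ip, dst_ip, protocol, dst_port):
        return (
            ipaddress.ip_address(src_ip) in ipaddress.ip_network(self.source)
            and ipaddress.ip_address(dst_ip) in ipaddress.ip_network(self.destination)
            and protocol == self.protocol
            and dst_port == self.dest_port
        )


# "Application A is permitted from source IP 1.2.3.4 to the database on tcp/3306"
rule = FirewallRule("1.2.3.4/32", "192.0.2.10/32", "tcp", 3306)

print(rule.matches("1.2.3.4", "192.0.2.10", "tcp", 3306))  # True
print(rule.matches("1.2.3.5", "192.0.2.10", "tcp", 3306))  # False: different source IP
```

The rule only works if Application A keeps egressing from 1.2.3.4, which is why a static source IP per application matters to these teams.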

We haven’t fleshed out this capability yet, and we’d like your feedback, via the Cloud Foundry #container-networking Slack channel or through GitHub issues or feature requests.

- Would you want a capability to assign a static source IP to application traffic when it egresses the CF perimeter?

- Would this be on demand? (Automatically assigning a static IP to every application is wasteful…?)

- Could IP addresses be reused once reclaimed? (How would the firewall be updated?)

- Should that source IP be picked from a pool, or specified on the command line/API?

- If you don’t like 5-tuple, what would you prefer? NetFlow configurations? Leave the encapsulation with application identifiers intact and let you decode it?

A Note About Internet Access/Exfiltration

Future work may extend to requests originating within Cloud Foundry to the external internet. Today this is largely unfettered access (typically through a NAT gateway), but we envision “external endpoints” (Internet, intranet, FQDN, etc.) eventually being woven into the logical topology of a CF application. We’d welcome feedback on this possible feature.